
K8S Installation

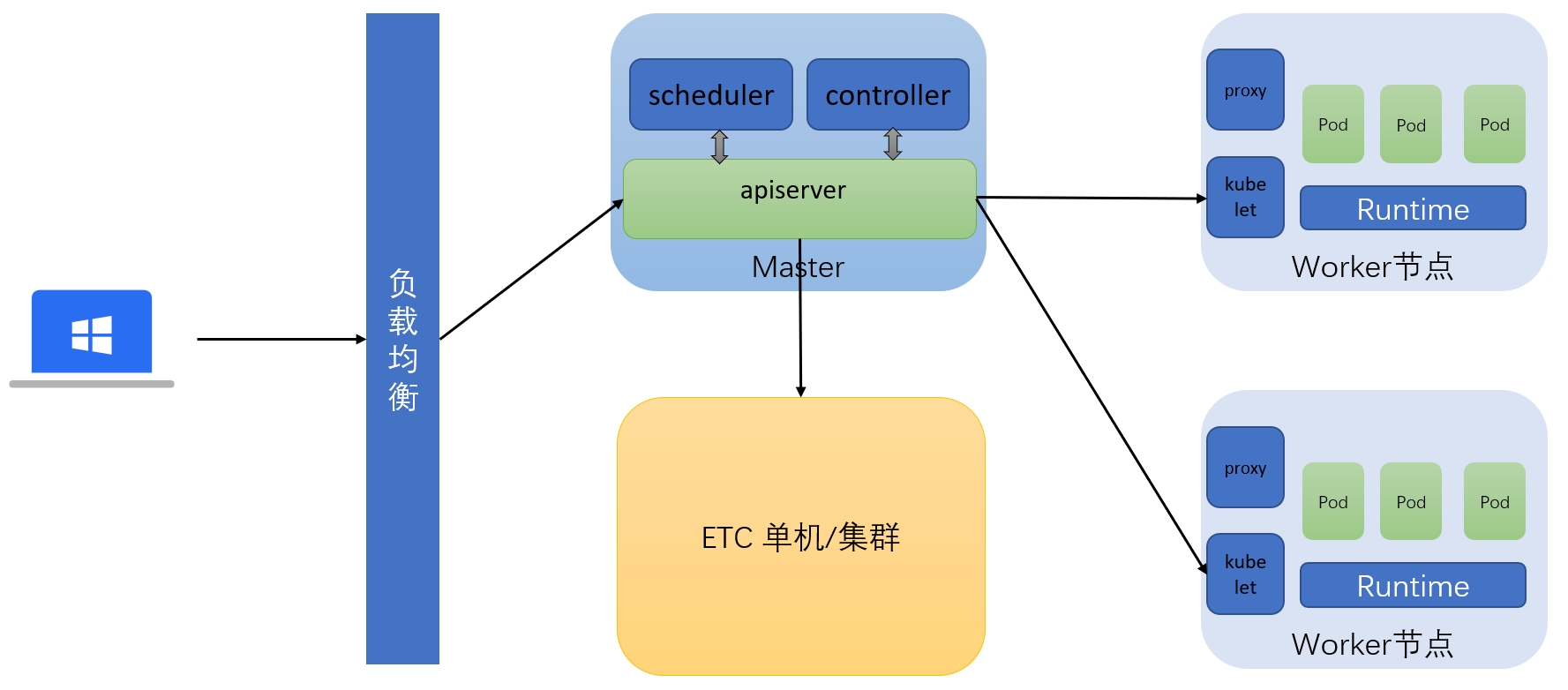

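Binary deployment of a highly available Kubernetes cluster. I will skip the long introduction, since most readers already know the background.

To deploy LVS+Keepalived as a highly available load balancer, see the article: LVS+Keepalived High Availability

Download via Baidu Netdisk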

1. Cluster architecture

etcd is installed on all three machines to form a cluster.

| Host role | IP | Software |
|---|---|---|
| Master01 | 172.16.1.11 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| Worker01 | 172.16.1.21 | kubelet, kube-proxy, containerd, runc, etcd |
| Worker02 | 172.16.1.22 | kubelet, kube-proxy, containerd, runc, etcd |

Network allocation

| Network | CIDR |
|---|---|
| Node network | 172.16.1.0/24 |
| Service network | 10.96.0.0/16 |
| Pod network | 10.244.0.0/16 |
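The three networks must not overlap, and two addresses used later in this guide are derived from the Service network: 10.96.0.1 (the `kubernetes` Service, listed in the apiserver certificate) and 10.96.0.2 (cluster DNS). The following is a minimal bash sketch for checking this; the function names are hypothetical helpers and it handles IPv4 only.

```bash
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the first and last address (as integers) of a CIDR block.
cidr_range() {
  local ip=${1%/*} bits=${1#*/} base mask
  base=$(ip_to_int "$ip")
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  echo "$(( base & mask )) $(( (base & mask) | (~mask & 0xFFFFFFFF) ))"
}

# Succeed (exit status 0) if two CIDR blocks share at least one address.
cidr_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<< "$(cidr_range "$1")"
  read -r s2 e2 <<< "$(cidr_range "$2")"
  (( s1 <= e2 && s2 <= e1 ))
}

# Print the address at offset N from the start of a CIDR block.
nth_ip() {
  local s e
  read -r s e <<< "$(cidr_range "$1")"
  int_to_ip $(( s + $2 ))
}
```

For example, `cidr_overlap 10.96.0.0/16 10.244.0.0/16` fails (no overlap), and `nth_ip 10.96.0.0/16 1` prints the address that the apiserver certificate must contain.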

2. Node initialization

2.1 All nodes

Disable the firewall: systemctl disable --now firewalld

Disable SELinux: sed -ri 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config (takes effect after a reboot)

Disable swap

bash

swapoff -a # disable temporarily

sed -ri 's/.*swap.*/#&/' /etc/fstab # disable permanently

Set an environment variable so that the date command shows 24-hour time (vi /etc/environment) [optional]

bash

export LC_TIME=POSIX # takes effect for the current session

echo "LC_TIME=POSIX" >> /etc/environment # takes effect permanently

Configure the NTP server

shell

vi /etc/chrony.conf

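pool ntp1.aliyun.com iburst # add this line to the configuration file

systemctl restart chronyd # restart the service

# Other commands

chronyc -a makestep # synchronize the time immediately

chronyc tracking # check the offset between the local clock and the NTP server

chronyc sources -v # list the NTP servers and their status

shell

yum -y install ntpdate # install the package

crontab -e # schedule a time-synchronization cron job

0 */1 * * * ntpdate ntp1.aliyun.com

crontab -l # list the time-synchronization cron job

Limit tuning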

bash

ulimit -SHn 65535

cat <<EOF >> /etc/security/limits.conf

* soft nofile 655360
* hard nofile 655360
* soft nproc 655350
* hard nproc 655350
* soft memlock unlimited
* hard memlock unlimited
EOF

Linux kernel configuration

bash

cat <<EOF >> /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 131072

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

Optional tools (I personally recommend installing these)

shell

yum install wget jq psmisc vim net-tools telnet yum-utils lvm2 lrzsz tar unzip ipvsadm ipset sysstat conntrack libseccomp -y

Reboot
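After the reboot, it can be worth confirming that the values written to /etc/sysctl.d/k8s.conf are actually in effect. Note that the `net.bridge.*` keys only exist while the `br_netfilter` kernel module is loaded, so they may show up as missing until `modprobe br_netfilter` has been run. The sketch below is a hypothetical helper (`check_sysctl` is not a standard tool) that compares each `key = value` line with the corresponding file under /proc/sys.

```bash
#!/usr/bin/env bash
# check_sysctl CONF [PROC_ROOT]
# Compare every "key = value" line in CONF with the live value under
# PROC_ROOT (default /proc/sys). Prints one line per mismatch and
# returns non-zero if anything is missing or different.
check_sysctl() {
  local conf=$1 root=${2:-/proc/sys} rc=0 key want path have
  while IFS='=' read -r key want || [[ -n $key ]]; do
    key=${key//[[:space:]]/}
    want=${want//[[:space:]]/}
    # Skip blank lines and comments.
    [[ -z $key || $key == \#* ]] && continue
    # net.ipv4.ip_forward -> net/ipv4/ip_forward
    path="$root/${key//.//}"
    if [[ ! -r $path ]]; then
      echo "MISSING $key"
      rc=1
      continue
    fi
    have=$(tr -d '[:space:]' < "$path")
    if [[ $have != "$want" ]]; then
      echo "DIFF $key want=$want have=$have"
      rc=1
    fi
  done < "$conf"
  return $rc
}
```

A typical call would be `check_sysctl /etc/sysctl.d/k8s.conf`; keys reported as MISSING usually point to an unloaded module or a key that the running kernel does not provide.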

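3. SSL certificates

The deployment generates SSL certificates at several points, so for convenience I wrote a script that produces them automatically with openssl. Copy it, adjust only the variables at the top, and it generates all certificates in one run.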
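For orientation, every certificate in the script follows the same three steps: generate a key, create a CSR, and sign it with the CA while attaching the SAN entries. The sketch below isolates that pattern and adds a SAN check. `issue_cert` and `cert_has_ip` are hypothetical helper names, the 365-day lifetime is an arbitrary choice for illustration, and at least one IP must be passed.

```bash
#!/usr/bin/env bash
# issue_cert NAME CN CA_PREFIX IP...
# Creates NAME.key and NAME.crt, signed by CA_PREFIX.crt / CA_PREFIX.key,
# with the given IP addresses as subjectAltName entries.
issue_cert() {
  local name=$1 cn=$2 ca=$3
  shift 3
  local san="" i=1 ip conf rc
  for ip in "$@"; do
    san+="IP.$i = $ip"$'\n'
    i=$((i + 1))
  done
  conf=$(mktemp)
  cat > "$conf" <<EOF
[req]
distinguished_name = dn
req_extensions = v3_req
[dn]
[v3_req]
keyUsage = critical, digitalSignature, keyEncipherment
extendedKeyUsage = serverAuth, clientAuth
subjectAltName = @alt_names
[alt_names]
$san
EOF
  # Step 1: private key. Step 2: CSR. Step 3: certificate signed by the CA.
  openssl genrsa -out "$name.key" 2048 2>/dev/null &&
  openssl req -new -key "$name.key" -subj "/CN=$cn" -out "$name.csr" -config "$conf" &&
  openssl x509 -req -sha256 -in "$name.csr" -CA "$ca.crt" -CAkey "$ca.key" \
    -CAcreateserial -out "$name.crt" -days 365 \
    -extensions v3_req -extfile "$conf" 2>/dev/null
  rc=$?
  rm -f "$conf" "$name.csr"
  return $rc
}

# cert_has_ip CERT IP: succeed if CERT lists exactly IP in its subjectAltName.
cert_has_ip() {
  openssl x509 -in "$1" -noout -text |
    tr ',' '\n' |
    sed 's/^[[:space:]]*//; s/[[:space:]]*$//' |
    grep -Fxq "IP Address:$2"
}
```

After running the full script, a call such as `cert_has_ip etcd-peer.crt 172.16.1.21` is a quick way to confirm that a node address made it into the certificate.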

bash

#!/bin/bash -x

days=3650

etcd_ip="

IP.1 = 127.0.0.1

IP.2 = 172.16.1.11

IP.3 = 172.16.1.21

IP.4 = 172.16.1.22

"

k8s_ip="

IP.1 = 127.0.0.1

IP.2 = 10.96.0.1

IP.3 = 172.16.1.11

IP.4 = 172.16.1.12

IP.5 = 172.16.1.13

IP.6 = 172.16.1.14

IP.7 = 172.16.1.15

IP.8 = 172.16.1.16

IP.9 = 172.16.1.17

IP.10 = 172.16.1.18

IP.11 = 172.16.1.200

"

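echo "Welcome to the SSL certificate generation script!"

echo "Select an option:
1. Generate etcd certificates
2. Generate the k8s CA certificate
3. Generate the kube-apiserver certificate
4. Generate the kubectl certificate
5. Generate the kube-controller-manager certificate
6. Generate the kube-scheduler certificate
7. Generate the kube-proxy certificate
8. Generate all certificates
9. Press any other key to exit"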

read name

create_etcd_ca(){

cat > openssl.conf << EOF

[req]

req_extensions = v3_req

distinguished_name = req_distinguished_name

[req_distinguished_name]

[ v3_req ]

keyUsage = critical, digitalSignature, keyEncipherment

extendedKeyUsage = serverAuth, clientAuth

subjectAltName = @alt_names

[alt_names]

DNS.1 = etcd1

DNS.2 = etcd2

DNS.3 = etcd3

${etcd_ip}

EOF

# Prepare the CA certificate

[ -f etcd-ca.key ] || openssl genrsa -out etcd-ca.key 2048

[ -f etcd-ca.crt ] || openssl req -x509 -new -nodes -key etcd-ca.key -subj "/CN=etcd-ca" -days ${days} -out etcd-ca.crt

# Create the etcd client certificate

[ -f etcd-client.key ] || openssl genrsa -out etcd-client.key 2048

[ -f etcd-client.csr ] || openssl req -new -key etcd-client.key -subj "/CN=etcd-client" -out etcd-client.csr -config openssl.conf

[ -f etcd-client.crt ] || openssl x509 -req -sha256 -in etcd-client.csr -CA etcd-ca.crt -CAkey etcd-ca.key -CAcreateserial -out etcd-client.crt -days ${days} -extensions v3_req -extfile openssl.conf

# Create the certificate for communication between etcd cluster peers

[ -f etcd-peer.key ] || openssl genrsa -out etcd-peer.key 2048

[ -f etcd-peer.csr ] || openssl req -new -key etcd-peer.key -subj "/CN=etcd-peer" -out etcd-peer.csr -config openssl.conf

[ -f etcd-peer.crt ] || openssl x509 -req -sha256 -in etcd-peer.csr -CA etcd-ca.crt -CAkey etcd-ca.key -CAcreateserial -out etcd-peer.crt -days ${days} -extensions v3_req -extfile openssl.conf

rm -f openssl.conf

}

create_k8s_ca(){

[ -f ca.key ] || openssl genrsa -out ca.key 2048

[ -f ca.crt ] || openssl req -x509 -new -nodes -key ca.key -subj "/CN=k8s-ca" -days ${days} -out ca.crt

}

kube_apiserver(){

cat > openssl.conf << EOF

[req]

default_bits = 2048

prompt = no

default_md = sha256

req_extensions = v3_req

distinguished_name = dn

[ dn ]

C = CN

ST = AH

L = CZ

O = moujun

OU = it

[alt_names]

DNS.1 = kubernetes

DNS.2 = kubernetes.default

DNS.3 = kubernetes.default.svc

DNS.4 = kubernetes.default.svc.cluster

DNS.5 = kubernetes.default.svc.cluster.local

${k8s_ip}

[ v3_req ]

keyUsage=keyEncipherment,dataEncipherment

extendedKeyUsage=serverAuth,clientAuth

subjectAltName=@alt_names

EOF

# Create the k8s kube-apiserver certificate

[ -f kube-apiserver.key ] || openssl genrsa -out kube-apiserver.key 2048

[ -f kube-apiserver.csr ] || openssl req -new -key kube-apiserver.key -subj "/CN=k8s-kube-apiserver" -out kube-apiserver.csr -config openssl.conf

[ -f kube-apiserver.crt ] || openssl x509 -req -sha256 -in kube-apiserver.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-apiserver.crt -days ${days} -extensions v3_req -extfile openssl.conf -sha256

rm -f openssl.conf

}

kubectl(){

cat > openssl.conf << EOF

[req]

default_bits = 2048

prompt = no

default_md = sha256

req_extensions = v3_req

distinguished_name = dn

[ dn ]

C = CN

ST = AH

L = CZ

O = system:masters

OU = system

CN = admin

[ v3_req ]

keyUsage=keyEncipherment,dataEncipherment

extendedKeyUsage=serverAuth,clientAuth

EOF

# Create the k8s kubectl-admin certificate

[ -f kubectl-admin.key ] || openssl genrsa -out kubectl-admin.key 2048

[ -f kubectl-admin.csr ] || openssl req -new -key kubectl-admin.key -out kubectl-admin.csr -config openssl.conf -subj "/CN=admin/O=system:masters/OU=System"

[ -f kubectl-admin.crt ] || openssl x509 -req -sha256 -in kubectl-admin.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kubectl-admin.crt -days ${days} -extensions v3_req -extfile openssl.conf -sha256

rm -f openssl.conf

}

kube_controller_manager(){

cat > openssl.conf << EOF

[req]

default_bits = 2048

prompt = no

default_md = sha256

req_extensions = v3_req

distinguished_name = dn

[ dn ]

C = CN

ST = AH

L = CZ

O = system:kube-controller-manager

OU = system

CN = system:kube-controller-manager

[alt_names]

${k8s_ip}

[ v3_req ]

keyUsage=keyEncipherment,dataEncipherment

extendedKeyUsage=serverAuth,clientAuth

subjectAltName=@alt_names

EOF

# Create the k8s kube-controller-manager certificate
# (-subj overrides the [ dn ] section, so O is kept in line with it instead of system:masters)

[ -f kube-controller-manager.key ] || openssl genrsa -out kube-controller-manager.key 2048

[ -f kube-controller-manager.csr ] || openssl req -new -key kube-controller-manager.key -out kube-controller-manager.csr -config openssl.conf -subj "/CN=system:kube-controller-manager/O=system:kube-controller-manager/OU=System"

[ -f kube-controller-manager.crt ] || openssl x509 -req -sha256 -in kube-controller-manager.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-controller-manager.crt -days ${days} -extensions v3_req -extfile openssl.conf -sha256

rm -f openssl.conf

}

kube_scheduler(){

cat > openssl.conf << EOF

[req]

default_bits = 2048

prompt = no

default_md = sha256

req_extensions = v3_req

distinguished_name = dn

[ dn ]

C = CN

ST = AH

L = CZ

O = system:kube-scheduler

OU = system

CN = system:kube-scheduler

[alt_names]

${k8s_ip}

[ v3_req ]

keyUsage=keyEncipherment,dataEncipherment

extendedKeyUsage=serverAuth,clientAuth

subjectAltName=@alt_names

EOF

# Create the kube-scheduler certificate

[ -f kube-scheduler.key ] || openssl genrsa -out kube-scheduler.key 2048

[ -f kube-scheduler.csr ] || openssl req -new -key kube-scheduler.key -out kube-scheduler.csr -config openssl.conf -subj "/CN=system:kube-scheduler/O=system:kube-scheduler/OU=System"

[ -f kube-scheduler.crt ] || openssl x509 -req -sha256 -in kube-scheduler.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-scheduler.crt -days ${days} -extensions v3_req -extfile openssl.conf -sha256

rm -f openssl.conf

}

bube_proxy(){

cat > openssl.conf << EOF

[req]

default_bits = 2048

prompt = no

default_md = sha256

req_extensions = v3_req

distinguished_name = dn

[ dn ]

C = CN

ST = AH

L = CZ

O = moujun

OU = IT

CN = system:kube-proxy

[ v3_req ]

keyUsage=keyEncipherment,dataEncipherment

extendedKeyUsage=serverAuth,clientAuth

EOF

# Create the k8s kube-proxy certificate

[ -f kube-proxy.key ] || openssl genrsa -out kube-proxy.key 2048

[ -f kube-proxy.csr ] || openssl req -new -key kube-proxy.key -out kube-proxy.csr -config openssl.conf -subj "/CN=system:kube-proxy"

[ -f kube-proxy.crt ] || openssl x509 -req -sha256 -in kube-proxy.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out kube-proxy.crt -days ${days} -extensions v3_req -extfile openssl.conf -sha256

rm -f openssl.conf

}

if [ ${name} = "1" ]

then

create_etcd_ca

elif [ ${name} = "2" ]

then

create_k8s_ca

elif [ ${name} = "3" ]

then

kube_apiserver

elif [ ${name} = "4" ]

then

kubectl

elif [ ${name} = "5" ]

then

kube_controller_manager

elif [ ${name} = "6" ]

then

kube_scheduler

elif [ ${name} = "7" ]

then

bube_proxy

elif [ ${name} = "8" ]

then

create_etcd_ca

create_k8s_ca

kube_apiserver

kubectl

kube_controller_manager

kube_scheduler

bube_proxy

else

echo "Exiting"

fi

4. ETCD deployment

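etcd is a strongly consistent, distributed key-value store that provides a reliable way to store data that needs to be accessed by a distributed system or a cluster of machines. It handles leader elections gracefully during network partitions and tolerates machine failure, even of the leader node.

etcd and Redis serve different purposes. etcd: distributed coordination (service discovery, configuration management, lock services) and storage of critical data that needs strong consistency, high availability, and concurrent reads and writes. Redis: caching to speed up access to databases or other slow storage; session management (user session information and state); real-time analytics (storing and processing streaming data).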
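The fault tolerance mentioned above follows from majority quorum: a cluster of n members needs floor(n/2) + 1 of them to agree, so it stays writable with n minus that many members down. A short bash illustration of the arithmetic (the function names are only for this example):

```bash
#!/usr/bin/env bash
# quorum N: number of members that must agree (a strict majority).
quorum() { echo $(( $1 / 2 + 1 )); }

# fault_tolerance N: members that can fail while a majority remains.
fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 3 5; do
  echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

For the three-member cluster built in this guide the quorum is 2, so one node can be lost without losing write availability.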

4.1 Download the package

The etcd release package can be downloaded from the official site

bash

wget https://github.com/etcd-io/etcd/releases/download/v3.5.16/etcd-v3.5.16-linux-amd64.tar.gz

Extract and install

bash

tar -xvf etcd-v3.5.16-linux-amd64.tar.gz

cp -p etcd-v3.5.16-linux-amd64/etcd* /usr/local/bin/

# Copy directly to the other two servers with scp

scp etcd-v3.5.16-linux-amd64/etcd* root@172.16.1.21:/usr/local/bin/

scp etcd-v3.5.16-linux-amd64/etcd* root@172.16.1.22:/usr/local/bin/

4.3 Sync the certificates to the other nodes

bash

scp etcd* root@172.16.1.21:/etc/kubernetes/ssl/

scp etcd* root@172.16.1.22:/etc/kubernetes/ssl/

4.3 Create the configuration files

bash

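# Directory for the SSL certificates

mkdir -p /etc/kubernetes/ssl

# Cluster data directory

mkdir -p /var/lib/etcd/default.etcd

# Directory for the etcd configuration file

mkdir -p /etc/etcd

Create the etcd configuration file /etc/etcd/conf.yaml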

bash

cat > /etc/etcd/conf.yaml << "EOF"

name: etcd1
data-dir: /var/lib/etcd/default.etcd
listen-peer-urls: https://172.16.1.11:2380
listen-client-urls: https://172.16.1.11:2379,https://127.0.0.1:2379
initial-advertise-peer-urls: https://172.16.1.11:2380
advertise-client-urls: https://172.16.1.11:2379
initial-cluster: etcd1=https://172.16.1.11:2380,etcd2=https://172.16.1.21:2380,etcd3=https://172.16.1.22:2380
initial-cluster-token: etcd-cluster
initial-cluster-state: new
# Certificates for client-to-server communication
client-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-client.crt
  key-file: /etc/kubernetes/ssl/etcd-client.key
  client-cert-auth: true
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
# Certificates for communication between cluster peers
peer-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-peer.crt
  key-file: /etc/kubernetes/ssl/etcd-peer.key
  client-cert-auth: false
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
  auto-tls: false
EOF

bash

cat > /etc/etcd/conf.yaml <<"EOF"

name: etcd2
data-dir: /var/lib/etcd/default.etcd
listen-peer-urls: https://172.16.1.21:2380
listen-client-urls: https://172.16.1.21:2379,https://127.0.0.1:2379
initial-advertise-peer-urls: https://172.16.1.21:2380
advertise-client-urls: https://172.16.1.21:2379
initial-cluster: etcd1=https://172.16.1.11:2380,etcd2=https://172.16.1.21:2380,etcd3=https://172.16.1.22:2380
initial-cluster-token: etcd-cluster
initial-cluster-state: new
# Certificates for client-to-server communication
client-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-client.crt
  key-file: /etc/kubernetes/ssl/etcd-client.key
  client-cert-auth: true
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
# Certificates for communication between cluster peers
peer-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-peer.crt
  key-file: /etc/kubernetes/ssl/etcd-peer.key
  client-cert-auth: false
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
  auto-tls: false
EOF

bash

cat > /etc/etcd/conf.yaml <<"EOF"

name: etcd3
data-dir: /var/lib/etcd/default.etcd
listen-peer-urls: https://172.16.1.22:2380
listen-client-urls: https://172.16.1.22:2379,https://127.0.0.1:2379
initial-advertise-peer-urls: https://172.16.1.22:2380
advertise-client-urls: https://172.16.1.22:2379
initial-cluster: etcd1=https://172.16.1.11:2380,etcd2=https://172.16.1.21:2380,etcd3=https://172.16.1.22:2380
initial-cluster-token: etcd-cluster
initial-cluster-state: new
# Certificates for client-to-server communication
client-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-client.crt
  key-file: /etc/kubernetes/ssl/etcd-client.key
  client-cert-auth: true
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
# Certificates for communication between cluster peers
peer-transport-security:
  cert-file: /etc/kubernetes/ssl/etcd-peer.crt
  key-file: /etc/kubernetes/ssl/etcd-peer.key
  client-cert-auth: false
  trusted-ca-file: /etc/kubernetes/ssl/etcd-ca.crt
  auto-tls: false
EOF

4.4 Create the etcd service

bash

cat > /etc/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

[Service]

User=root

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd --config-file /etc/etcd/conf.yaml

Restart=on-failure

LimitNOFILE=1048576

RestartSec=5

StartLimitIntervalSec=10

StartLimitBurst=5

[Install]

WantedBy=multi-user.target

EOF

4.6 Start the etcd cluster

bash

systemctl daemon-reload

systemctl enable --now etcd.service

systemctl status etcd

systemctl restart etcd

Check the cluster status

bash

# Without SSL

ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --endpoints=http://172.16.1.11:2379,http://172.16.1.21:2379,http://172.16.1.22:2379 endpoint health

# With SSL

ETCDCTL_API=3 etcdctl --cacert=/etc/kubernetes/ssl/etcd-ca.crt --cert=/etc/kubernetes/ssl/etcd-client.crt --key=/etc/kubernetes/ssl/etcd-client.key --endpoints=https://172.16.1.11:2379,https://172.16.1.21:2379,https://172.16.1.22:2379 endpoint health

5. Master deployment

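5.1 apiserver deployment

bash

# Official download page (pick what you need): https://kubernetes.io/zh-cn/releases/download/

wget https://dl.k8s.io/v1.31.1/kubernetes-server-linux-amd64.tar.gz

Extract the archive and copy kube-apiserver, kube-controller-manager, kube-scheduler, and kubectl from kubernetes/server/bin/ to /usr/local/bin/ (kubelet and kube-proxy are copied to the worker nodes later).

Create the token required for TLS bootstrapping

TLS bootstrapping: once the apiserver on the master has TLS authentication enabled, the kubelet and kube-proxy on every node must present a valid certificate signed by the CA to talk to kube-apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster harder. To simplify this, Kubernetes introduced the TLS bootstrapping mechanism, which issues client certificates automatically: the kubelet connects as a low-privilege user and requests a certificate through the apiserver, and the certificate is signed dynamically with the cluster signing key. Using this approach on nodes is strongly recommended. It is currently used mainly for the kubelet; kube-proxy still uses a certificate that we issue centrally.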

bash

cat > /etc/kubernetes/ssl/token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

Create the environment file for the apiserver service

bash

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=172.16.1.11 \

--secure-port=6443 \

--advertise-address=172.16.1.11 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/ssl/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.crt \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver.key \

--client-ca-file=/etc/kubernetes/ssl/ca.crt \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.crt \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver.key \

--service-account-key-file=/etc/kubernetes/ssl/ca.key \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca.key \

--service-account-issuer=api \

--etcd-cafile=/etc/kubernetes/ssl/etcd-ca.crt \

--etcd-certfile=/etc/kubernetes/ssl/etcd-client.crt \

--etcd-keyfile=/etc/kubernetes/ssl/etcd-client.key \

--etcd-servers=https://172.16.1.11:2379,https://172.16.1.21:2379,https://172.16.1.22:2379 \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--v=4"

EOF

ini

cat > /etc/systemd/system/kube-apiserver.service <<"EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

Start the service

bash

systemctl daemon-reload

systemctl enable --now kube-apiserver.service

systemctl status kube-apiserver.service

systemctl restart kube-apiserver.service

5.2 kubectl deployment

bash

kubectl config set-cluster kubernetes --certificate-authority=ca.crt --embed-certs=true --server=https://172.16.1.11:6443 --kubeconfig=kube.config

kubectl config set-credentials admin --client-certificate=kubectl-admin.crt --client-key=kubectl-admin.key --embed-certs=true --kubeconfig=kube.config

kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

kubectl config use-context kubernetes --kubeconfig=kube.config

bash

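mkdir -p /root/.kube/

cp -f kube.config ~/.kube/config

# The user must match the CN of the certificate passed to --kubelet-client-certificate
# (the generation script issues kube-apiserver.crt with CN=k8s-kube-apiserver)

kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user k8s-kube-apiserver --kubeconfig=/root/.kube/config

Copy /root/.kube/config to any machine that needs to run kubectl.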
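The four `kubectl config` calls used for the admin kubeconfig recur almost unchanged for kube-controller-manager, kube-scheduler, and kube-proxy later in this guide. As a sketch, they could be wrapped in one helper. `make_kubeconfig` and the `KUBECTL` override are hypothetical names introduced here, and unlike the commands in this guide the helper always names the context after the user.

```bash
#!/usr/bin/env bash
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the
# commands without touching any file.
KUBECTL=${KUBECTL:-kubectl}

# make_kubeconfig OUT USER CERT KEY [SERVER] [CA]
# Writes a kubeconfig to OUT that authenticates USER with a client certificate.
make_kubeconfig() {
  local out=$1 user=$2 cert=$3 key=$4
  local server=${5:-https://172.16.1.11:6443} ca=${6:-ca.crt}
  "$KUBECTL" config set-cluster kubernetes --certificate-authority="$ca" \
    --embed-certs=true --server="$server" --kubeconfig="$out" &&
  "$KUBECTL" config set-credentials "$user" --client-certificate="$cert" \
    --client-key="$key" --embed-certs=true --kubeconfig="$out" &&
  "$KUBECTL" config set-context "$user" --cluster=kubernetes --user="$user" \
    --kubeconfig="$out" &&
  "$KUBECTL" config use-context "$user" --kubeconfig="$out"
}
```

For instance, `make_kubeconfig kube-proxy.kubeconfig kube-proxy kube-proxy.crt kube-proxy.key` would produce the same kind of file as the manual kube-proxy steps, run from the directory that holds the certificates.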

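Connection check

bash

# Show cluster information

kubectl cluster-info

# Show the status of the cluster components

kubectl get componentstatuses

# List resource objects in all namespaces

kubectl get all --all-namespaces

5.3 kube-controller-manager deployment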

Master node

Create the kubeconfig

bash

kubectl config set-cluster kubernetes --certificate-authority=ca.crt --embed-certs=true --server=https://172.16.1.11:6443 --kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.crt --client-key=kube-controller-manager.key --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

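kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Sync to the other master nodes, if any (run this after the configuration and service files below have been created)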

bash

scp kube-controller-manager* root@172.16.1.12:/etc/kubernetes/ssl/

scp kube-controller-manager* root@172.16.1.13:/etc/kubernetes/ssl/

scp /etc/kubernetes/kube-controller-manager.conf root@172.16.1.12:/etc/kubernetes/

scp /etc/kubernetes/kube-controller-manager.conf root@172.16.1.13:/etc/kubernetes/

scp /usr/lib/systemd/system/kube-controller-manager.service root@172.16.1.12:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/kube-controller-manager.service root@172.16.1.13:/usr/lib/systemd/system/

bash

cat > /etc/kubernetes/kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS=" --secure-port=10257 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/ssl/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.96.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.crt \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca.key \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--root-ca-file=/etc/kubernetes/ssl/ca.crt \

--service-account-private-key-file=/etc/kubernetes/ssl/ca.key \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.crt \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager.key \

--use-service-account-credentials=true \

--v=2"

EOF

bash

cat > /usr/lib/systemd/system/kube-controller-manager.service << "EOF"

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Start the service

bash

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

5.4 kube-scheduler deployment

Create the kubeconfig

bash

kubectl config set-cluster kubernetes --certificate-authority=ca.crt --embed-certs=true --server=https://172.16.1.11:6443 --kubeconfig=kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.crt --client-key=kube-scheduler.key --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Copy the files to the other master nodes, if any

bash

scp kube-scheduler* root@172.16.1.12:/etc/kubernetes/ssl/

scp kube-scheduler* root@172.16.1.13:/etc/kubernetes/ssl/

Create the configuration file

bash

cat > /etc/kubernetes/kube-scheduler.conf << "EOF"

KUBE_SCHEDULER_OPTS="--kubeconfig=/etc/kubernetes/ssl/kube-scheduler.kubeconfig \

--leader-elect=true \

--v=2"

EOF

Create the service file

bash

cat > /usr/lib/systemd/system/kube-scheduler.service << "EOF"

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Start the service

bash

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

6. Worker node deployment

6.1 containerd installation

Download from the official site and choose the latest version

bash

wget https://github.com/containerd/containerd/releases/download/v1.7.23/cri-containerd-cni-1.7.23-linux-amd64.tar.gz

# Extract

tar xvf cri-containerd-cni-1.7.23-linux-amd64.tar.gz -C /

Create the default configuration

bash

mkdir -p /etc/containerd

# Generate the default configuration

containerd config default >/etc/containerd/config.toml

# Switch the cgroup driver to systemd (skip if your config.toml already has this change)

sed -i 's@SystemdCgroup = false@SystemdCgroup = true@' /etc/containerd/config.toml

# Use the pause:3.9 sandbox image (skip if your config.toml already has this change)

sed -i 's@registry.k8s.io/pause:3.8@registry.k8s.io/pause:3.9@' /etc/containerd/config.toml

Run runc to check for errors

bash

[root@node01 ~]# runc -v

runc version 1.1.14

commit: v1.1.14-0-g2c9f5602

spec: 1.0.2-dev

go: go1.22.8

libseccomp: 2.5.2

[root@node01 ~]#

Enable at boot

bash

systemctl daemon-reload

systemctl enable --now containerd

systemctl status containerd

Check that the socket is reachable

bash

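crictl -r unix:///run/containerd/containerd.sock info

6.2 kubelet installation

bash

# Before installing, copy the required binaries to the target directory on each worker node

scp kubelet kube-proxy root@172.16.1.21:/usr/local/bin/

scp kubelet kube-proxy root@172.16.1.22:/usr/local/bin/

Master node

Generate the bootstrap kubeconfig on the master node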

bash

kubectl config set-cluster kubernetes --certificate-authority=ca.crt --embed-certs=true --server=https://172.16.1.11:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap --token=$(awk -F "," '{print $1}' /etc/kubernetes/ssl/token.csv) --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

bash

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

bash

# Inspect the role bindings

kubectl describe clusterrolebinding cluster-system-anonymous

kubectl describe clusterrolebinding kubelet-bootstrap

Copy the files to the worker nodes

bash

scp kubelet-bootstrap.kubeconfig ca.crt root@172.16.1.21:/etc/kubernetes/ssl/

scp kubelet-bootstrap.kubeconfig ca.crt root@172.16.1.22:/etc/kubernetes/ssl/

Worker nodes

Create the working directory

bash

mkdir -p /var/lib/kubelet/plugins_registry

Create the configuration and service files

bash

cat > /etc/kubernetes/kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.crt"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "0.0.0.0",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

bash

cat > /usr/lib/systemd/system/kubelet.service << "EOF"

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/ssl/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--pod-infra-container-image=registry.k8s.io/pause:3.9 \

--config=/etc/kubernetes/kubelet.json \

--container-runtime-endpoint=unix:///run/containerd/containerd.sock \

--rotate-certificates \

--root-dir=/etc/cni/net.d \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Enable at boot

bash

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

6.3 kube-proxy

Master node

Create the kubeconfig

bash

kubectl config set-cluster kubernetes --certificate-authority=ca.crt --embed-certs=true --server=https://172.16.1.11:6443 --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.crt --client-key=kube-proxy.key --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Copy to the worker nodes

bash

scp kube-proxy.kubeconfig root@172.16.1.21:/etc/kubernetes/ssl/

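scp kube-proxy.kubeconfig root@172.16.1.22:/etc/kubernetes/ssl/

Worker nodes

Create the configuration file on the worker nodes. The service unit that follows sets WorkingDirectory=/var/lib/kube-proxy, so that directory must exist first (mkdir -p /var/lib/kube-proxy).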

bash

cat > /etc/kubernetes/kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  kubeconfig: /etc/kubernetes/ssl/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16
healthzBindAddress: 0.0.0.0:10256
kind: KubeProxyConfiguration
metricsBindAddress: 0.0.0.0:10249
mode: "ipvs"
EOF

bash

cat > /usr/lib/systemd/system/kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

bash

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

6.4 calico

Upload the image archives to a directory, change into it, and import the images

bash

for NODE in $(ls); do echo $NODE; ctr -n k8s.io i import $NODE; done

Install

bash

# Install

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.28.2/manifests/tigera-operator.yaml

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.28.2/manifests/custom-resources.yaml

# Uninstall

kubectl delete -f tigera-operator.yaml

kubectl delete -f custom-resources.yaml

kubectl delete pod xxx -n xxx # --force --grace-period=0

# View

kubectl describe pods

Note: custom-resources.yaml needs to be edited before it is applied, so download it and create it from the local file rather than directly from the URL

yaml

# This section includes base Calico installation configuration.
# For more information, see: https://docs.tigera.io/calico/latest/reference/installation/api#operator.tigera.io/v1.Installation
apiVersion: operator.tigera.io/v1
kind: Installation
metadata:
  name: default
spec:
  # Configures Calico networking.
  calicoNetwork:
    ipPools:
    - name: default-ipv4-ippool
      blockSize: 26
      cidr: 10.244.0.0/16 # change this to your own Pod network CIDR
      encapsulation: VXLANCrossSubnet
      natOutgoing: Enabled
      nodeSelector: all()

---

# This section configures the Calico API server.
# For more information, see: https://docs.tigera.io/calico/latest/reference/installation/api#operator.tigera.io/v1.APIServer
apiVersion: operator.tigera.io/v1
kind: APIServer
metadata:
  name: default
spec: {}

6.5 coredns

bash

cat > coredns.yaml << "EOF"

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
  - apiGroups:
    - ""
    resources:
    - endpoints
    - services
    - pods
    - namespaces
    verbs:
    - list
    - watch
  - apiGroups:
    - discovery.k8s.io
    resources:
    - endpointslices
    verbs:
    - list
    - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: k8s-app
                  operator: In
                  values: ["kube-dns"]
              topologyKey: kubernetes.io/hostname
      containers:
      - name: coredns
        image: coredns/coredns:1.11.3
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
          readOnly: true
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
        readinessProbe:
          httpGet:
            path: /ready
            port: 8181
            scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
            - key: Corefile
              path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP
  - name: metrics
    port: 9153
    protocol: TCP
EOF

Install

bash

# Install

kubectl create -f coredns.yaml

# Uninstall

kubectl delete -f coredns.yaml

# View

kubectl get pod -A

6.6 nginx

bash

cat > nginx.yaml << "EOF"

---
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-web
spec:
  replicas: 1
  selector:
    name: nginx
  template:
    metadata:
      labels:
        name: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:latest
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-service-nodeport
spec:
  ports:
    - port: 80
      targetPort: 80
      nodePort: 30001
      protocol: TCP
  type: NodePort
  selector:
    name: nginx
EOF

Install

bash

# Install

kubectl create -f nginx.yaml

# Uninstall

kubectl delete -f nginx.yaml

# View

kubectl get pod -A

kubectl get all
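As a final smoke test, it can help to confirm that every node reports Ready before checking the nginx NodePort. The helper below is a hypothetical sketch that filters the output of `kubectl get nodes --no-headers`; it assumes the default column layout, where the second column is STATUS.

```bash
#!/usr/bin/env bash
# not_ready_nodes: read `kubectl get nodes --no-headers` output on stdin and
# print the name of every node whose STATUS does not start with "Ready"
# (so "Ready,SchedulingDisabled" still counts as ready).
not_ready_nodes() {
  awk '$2 !~ /^Ready/ { print $1 }'
}
```

Usage would be `kubectl get nodes --no-headers | not_ready_nodes`; empty output means all nodes are Ready. The nginx Service defined above should then answer on port 30001 of any worker node, for example `curl -I http://172.16.1.21:30001`.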